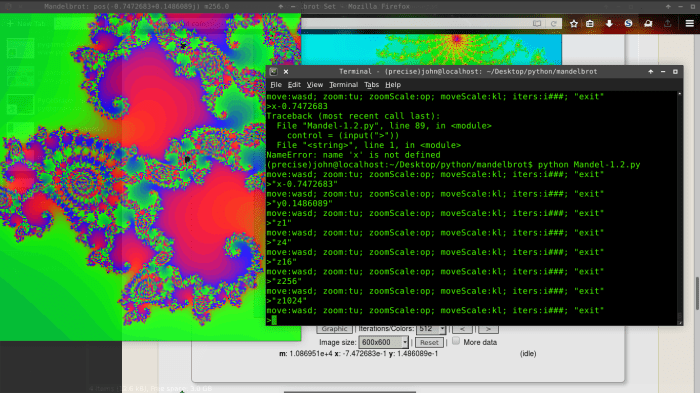

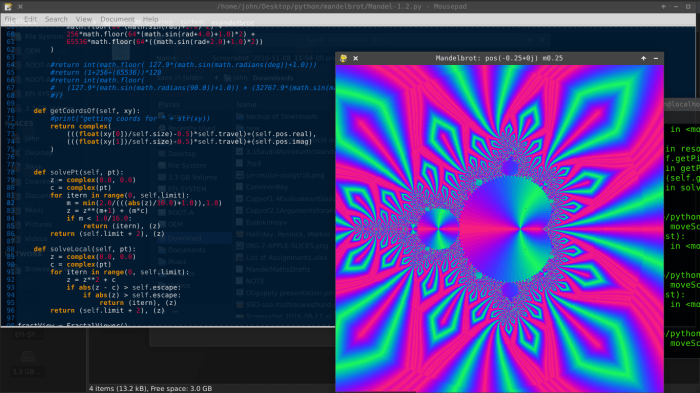

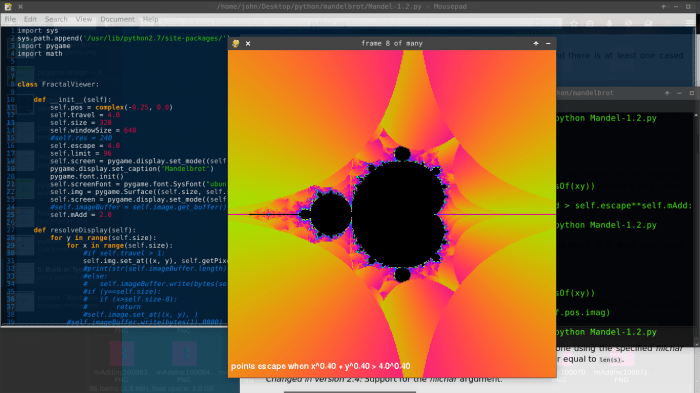

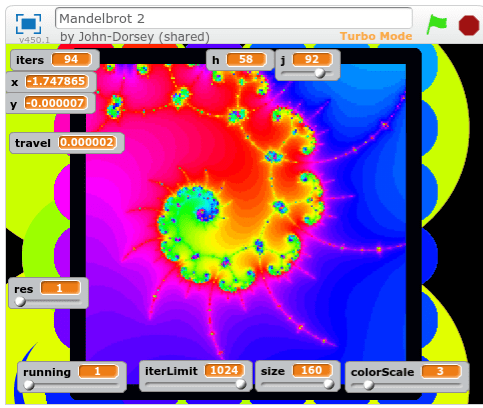

One day in the spring of 2016, I couldn’t make it to my internship, so I ended up in the Computer Programming II “cover class” that was actually listed on my schedule. That day I was introduced to Python. I didn’t have time to finish the customary Mandelbrot generator I use to put a new language through its paces, and I didn’t know much about Python by the end of the day either.

That summer, I had the great privilege of visiting Rose-Hulman for 2 1/2 weeks. I immediately joined computer programming, because I wanted to learn Python for real.

I was surprised to learn that Python is MAGIC. This xkcd put it well:

During my too-short stay in paradise, I finally learned how to work in a group. We used Dropbox instead of Git, for some reason.

I had originally planned to work alone and create a simple lossy image format (which I’m still interested in doing) until our professor convinced me to work in a group instead. Something about not having enough time to complete such a large project going solo. At the time all I could hear was him asking me to aim lower, but I’m thankful that I chose the group project now. It probably presented greater challenges and taught me more anyway – I’m already onto lossy compression techniques. Game design? Other people? Conforming to someone else’s master plan? Not so much yet.

I’m only going to release the code I contributed at this time.

file stickmanFigure3.py:

    #06.24.16
    import pygame
    from stickmanSprite6 import *
    from pose import *
    import animationLib3 as animLib
    import animationUtility as animUtil
    import time

    class StickmanFigure:
        def __init__(self, figureName = "Tom", expression = "Angry", scale = [1, 1], hasTail = True, drawMan = True, currentAnimName = "IDLE", mssg = ""):
            self.figureName = figureName
            self.message = mssg
            self.currentAnimName = currentAnimName
            self.contentSprite = StickmanSprite(figureName, expression, hasTail) #the main sprite
            self.animFrame = 0 #the current frame of the animation
            self.frame = 0 #the total number of frames ever
            self.baseDuration = 12.0 # the number of frames which pass between each keyframe
            self.currentAnim = animLib.getAnim(currentAnimName)
            self.currentAnimLength = animUtil.frameCount(self.currentAnim, self.baseDuration)
            self.drawMan = drawMan
            self.tempDrawMan = True
            self.scale = scale

        def doAnimation(self, animationName):
            self.animFrame = 0
            self.currentAnimName = animationName
            self.currentAnim = animLib.getAnim(self.currentAnimName)

        def draw(self, target, coords, scale):
            tailDirection = scale[0] / abs(scale[0])
            scale = (abs(scale[0]), scale[1])
            scale = self.scale
            if self.drawMan:
                if self.animFrame >= self.currentAnimLength:
                    self.doAnimation(self.currentAnimName)
                if not self.contentSprite.editing_isEditing:
                    self.contentSprite.setPose(self.getPose())
                self.animFrame += 1
                self.frame += 1
                return self.contentSprite.draw(target, coords, scale, tailDirection)

        def getPose(self):
            #print("StickmanFigure: StickmanFigure: getPose: calculating based on anim " + str(self.currentAnim))
            return animUtil.terpAnim(self.currentAnim, self.baseDuration, self.animFrame)
            #print("StickmanFigure: StickmanFigure: getPose: and now the animation is " + str(self.currentAnim))
            #print("StickmanFigure: StickmanFigure: getPose: returning " + str(result))

description:

The StickmanFigure class is responsible for running requested animations and manipulating a StickmanSprite object. It is the highest-level class I wrote, and can be dropped directly into any project that uses pygame.

file stickmanSprite6.py:

    #06.24.16
    import pygame
    import os
    from pose import *
    import math

    class StickmanSprite:
        face = pygame.Surface((32,32))

        def __init__(self, identity = "Tom", expression = "Angry", hasTail = True):
            #identity ----------------------------------------------------------------
            self.identity = identity #used in getting face image from resources
            self.expression = expression #used in getting face image from resources
            #pose data ---------------------------------------------------------------
            self.basePose = [[35,100], [65,100], [50,50], [50,30], [35,60], [65,60]] #the default locations of all parts. poses are added to this.
            self.currentPose = [[0,0], [0,0], [0,0], [0,0], [0,0], [0,0]] #the current pose, can be used to access the pose which is in use.
            self.drawPose = [[0,0], [0,0], [0,0], [0,0], [0,0], [0,0]] #the pose which is editable and drawable (basePose + currentPose = drawPose)
            self.drawPose = Pose.add(self.basePose, self.currentPose) #initialize drawPose
            #configuration -----------------------------------------------------------
            self.headResponse = 0.6 #effect of pose on head angle
            self.hasTail = hasTail #enable/disable tail feature
            self.tailWavelength = 32 #number of frames for a full cycle of tail wiggle amplitude
            self.tailLength = 0.5 #shorten tail to the specified length without changing the wave
            self.tailAmplitude = 0.01 #multiplier for tail wiggle amplitude
            self.tailHeights = [0,10,18,24,26,24,18,10,0,-10,-18,-24,-26,-24,-18,-10] #approximation of a sine wave
            self.foreground = [0,0,0] #foreground color
            self.background = [220,220,220] #background color
            #self.aa = True #enable/disable antialiasing. this is no longer available.
            self.lineWidth = 3
            #other --------------------------------------------------------------------
            self.editing_isEditing = False #when True, the stickman can be edited by clicking and dragging parts, to get pose information
            self.editing_clicked_left = False #whether the stickman is being clicked during editing
            self.editing_clicked_right = False
            self.timesDrawn = 0 #number of frames the stickman has been drawn in. used in tail calculations!
            self.framePhase = 0 #cached tail wiggle amplitude for this frame (instead of recalculating for each pixel.) this is calculated at the start of draw()
            self.face = pygame.image.load(self.identity + "_" + self.expression + ".png") #his face
            #self.face = self.face.convert()
            #self.face.set_alpha()
            #self.face.set_colorkey([255,255,255])
            #constants ----------------------------------------------------------------
            self.PI = 3.141592653589793238462643383279502884197169399375
            self.TAU = self.PI * 2

        def setPose(self, newPose): #set the pose of the stickman
            self.currentPose = newPose
            self.drawPose = Pose.add(self.basePose, self.currentPose)

        def draw(self, target, coords, scale, tailDirection = 1):
            if self.editing_isEditing:
                self.edit(coords, scale) #edit while drawing
            self.timesDrawn += 1
            #self.framePhase = float(math.sin((self.timesDrawn / self.tailWavelength) * math.pi * 2)) * 100000000000000000.0
            self.framePhase = self.timesDrawn%self.tailWavelength - (self.tailWavelength/2)
            #self.framePhase = self.timesDrawn%self.tailWavelength
            #self.thisSurface.fill(self.background) #clear the main surface
            pygame.draw.line(target, self.foreground, [self.drawPose[0][0]*scale[0]+coords[0],self.drawPose[0][1]*scale[1]+coords[1]], [self.drawPose[2][0]*scale[0]+coords[0],self.drawPose[2][1]*scale[1]+coords[1]], self.lineWidth) #draw left leg
            pygame.draw.line(target, self.foreground, [self.drawPose[1][0]*scale[0]+coords[0],self.drawPose[1][1]*scale[1]+coords[1]], [self.drawPose[2][0]*scale[0]+coords[0],self.drawPose[2][1]*scale[1]+coords[1]], self.lineWidth) #draw right leg
            pygame.draw.line(target, self.foreground, [self.drawPose[2][0]*scale[0]+coords[0],self.drawPose[2][1]*scale[1]+coords[1]], [self.drawPose[3][0]*scale[0]+coords[0],self.drawPose[3][1]*scale[1]+coords[1]], self.lineWidth) #draw torso
            pygame.draw.line(target, self.foreground, [self.drawPose[4][0]*scale[0]+coords[0],self.drawPose[4][1]*scale[1]+coords[1]], [self.drawPose[3][0]*scale[0]+coords[0],self.drawPose[3][1]*scale[1]+coords[1]], self.lineWidth) #draw left arm
            pygame.draw.line(target, self.foreground, [self.drawPose[5][0]*scale[0]+coords[0],self.drawPose[5][1]*scale[1]+coords[1]], [self.drawPose[3][0]*scale[0]+coords[0],self.drawPose[3][1]*scale[1]+coords[1]], self.lineWidth) #draw right arm
            if self.hasTail:
                self.drawTail(target, coords, scale, tailDirection) #draw tail
            if scale == [.5,.5]:
                stickmanHead = pygame.transform.rotate(self.face, Pose.getHeadRot(self.drawPose) * -self.headResponse)
                stickmanHead = pygame.transform.scale(self.face, (int(64*scale[0]),int(128*scale[1]))) #note: scales self.face, so the rotated surface above is discarded
                posx = 64*scale[0] - 145*scale[0]
                posy = 64*scale[1] - 150*scale[1]
                target.blit(stickmanHead, ((int(self.drawPose[3][0]+posx)+coords[0]),int((self.drawPose[3][1]+posy)+coords[1]))) #special_flags=pygame.BLEND_RGB_MULT
            else:
                stickmanHead = pygame.transform.rotate(self.face, Pose.getHeadRot(self.drawPose) * -self.headResponse)
                stickmanHead = pygame.transform.scale(self.face, (int(64*scale[0]),int(128*scale[1]))) #note: scales self.face, so the rotated surface above is discarded
                target.blit(stickmanHead, ((self.drawPose[3][0]-32)*scale[0]+coords[0],(self.drawPose[3][1]-60)*scale[1]+coords[1]))#target.blit(self.thisSurface, (0,0))
            return stickmanHead

        def drawTail(self, drawOnto, coords, scale, direction):
            tailSpacing = self.tailLength * direction * 50 / len(self.tailHeights)
            #print(str(tailSpacing))
            for index in range(len(self.tailHeights)-1):
                #print(str(index))
                pygame.draw.line(drawOnto, self.foreground,
                    [(tailSpacing*(index+0) + self.drawPose[2][0])*scale[0] + coords[0], (self.drawPose[2][1] + self.tailHeights[index+0]*self.tailAmplitude*self.tailHeights[int(self.framePhase%len(self.tailHeights))])*scale[1] + coords[1]],
                    [(tailSpacing*(index+1) + self.drawPose[2][0])*scale[0] + coords[0], (self.drawPose[2][1] + self.tailHeights[index+1]*self.tailAmplitude*self.tailHeights[int(self.framePhase%len(self.tailHeights))])*scale[1] + coords[1]],
                    self.lineWidth)

        def toggleEdit(self):
            self.editing_isEditing = not self.editing_isEditing
            if self.editing_isEditing:
                print("left click to adjust nearest part, middle click to print pose")

        def edit(self, coords, scale):
            #print("editing stickman...")
            relativePos = pygame.mouse.get_pos()
            relativePos = [(relativePos[0]-coords[0])/scale[0],(relativePos[1]-coords[1])/scale[1]]
            editingPoint = self.getClosest(relativePos)
            if self.editing_clicked_left and (not pygame.mouse.get_pressed()[0]): #whenever the mouse button is released
                self.reversePose() #determine the actual pose, which is the difference between the current pose and the base pose
            if self.editing_clicked_right and (not pygame.mouse.get_pressed()[1]): #index 1 of get_pressed() is the middle button
                print(str(self.currentPose)) #print the current pose for use in an animation
            self.editing_clicked_left = pygame.mouse.get_pressed()[0]
            self.editing_clicked_right = pygame.mouse.get_pressed()[1]
            if self.editing_clicked_left:
                self.drawPose[editingPoint] = relativePos
                #print(" Moving a point... clicked==" + str(clicked))

        def getClosest(self, testPosition):
            #pair each point's distance with its index, then let min() pick the pair with the smallest distance
            distance, index = min((dist, ind) for (ind, dist) in enumerate(Pose.getDistance(testPosition, test) for test in self.drawPose)) #I do not understand this statement, but it works
            return index

        def reversePose(self):
            #print("reversing pose...")
            #print("drawPose: " + str(self.drawPose))
            #print("basePose: " + str(self.basePose))
            #print("changing current pose from " + str(self.currentPose))
            self.currentPose = Pose.add(self.drawPose, Pose.neg(self.basePose))
            #print("to " + str(self.currentPose))

description:

The StickmanSprite class is a system for drawing a stick man based on an adjustable pose. It also functions as the tool for creating animations: if the game is launched while editing_isEditing is True, you can drag the stick man’s limbs around to set his pose. When you middle-click, the current pose is printed to the console. Do this once for each keyframe, then copy all the lines from the console into the animation library and give the animation a name. It’s a fast system.
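The nearest-part lookup that the editor relies on can be sketched without pygame. This is only an illustration, assuming a pose is a list of six [x, y] pairs as in the listing; `distance` and `closest_index` are hypothetical names, not part of the project.

```python
def distance(a, b):
    """Euclidean distance between two [x, y] points."""
    return ((a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2) ** 0.5


def closest_index(test_position, pose):
    """Return the index of the pose point nearest to test_position.

    Each distance is paired with its index, and min() picks the pair
    with the smallest distance (ties go to the lower index).
    """
    pairs = [(distance(test_position, point), index)
             for index, point in enumerate(pose)]
    best_distance, best_index = min(pairs)
    return best_index


# Default joint layout from the sprite listing: feet, waist, neck, hands.
base_pose = [[35, 100], [65, 100], [50, 50], [50, 30], [35, 60], [65, 60]]

print(closest_index([36, 98], base_pose))  # 0, the left foot
```

Putting the distance first in each pair is what makes `min()` compare by distance before index.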

The sprite also handles facial expressions by loading images from a directory based on the character’s name and specified emotion. The head tilts to match the body at all times based on simple mathematical rules. It also has a tail, so that we could use it as a monkey when necessary. The tail, like the head, is not directly controlled by the pose.
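The head-tilt rule is small enough to show on its own. The sketch below assumes the same joint order as the listing (left foot, right foot, waist, neck, hands) and the same 0.6 response factor; the function names are made up for illustration.

```python
HEAD_RESPONSE = 0.6  # same value as headResponse in the sprite listing


def head_lean(pose):
    """Three times the neck's x minus the x's of both feet and the waist.

    The result is zero when the neck sits at the average x of those three
    points, and grows as the neck moves sideways away from them.
    """
    return 3 * pose[3][0] - pose[0][0] - pose[1][0] - pose[2][0]


def head_angle(pose, response=HEAD_RESPONSE):
    """Angle handed to the rotation call; the sign is flipped as in the listing."""
    return head_lean(pose) * -response


upright = [[35, 100], [65, 100], [50, 50], [50, 30], [35, 60], [65, 60]]
leaning = [[35, 100], [65, 100], [50, 50], [60, 30], [35, 60], [65, 60]]

print(head_lean(upright))   # 0
print(head_lean(leaning))   # 30
print(head_angle(leaning))
```

No trigonometry is involved, which is why it is only an approximation of the body's lean.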

I chose “Tom” as the name of the starter character as a reference to Tom’s Diner. In testing my own audio codec, though, I went my own way and decided on “Don’t Stop” by Foster The People (The Fat Rat’s remix).

file pose.py:

    class Pose:
        LFOOT = 0
        RFOOT = 1
        WAIST = 2
        NECK = 3
        LHAND = 4
        RHAND = 5

        def __init__(self):
            pass #it is possible, but pointless, to instantiate this empty class

        @staticmethod
        def neg(in1):
            result = [[0,0],[0,0],[0,0],[0,0],[0,0],[0,0]] #empty ffwnhh pose
            for i in range(len(in1)): #for all in the first dimension
                for ii in range(2): #for all in the second dimension
                    result[i][ii] = -in1[i][ii] #set to the opposite of the input
            return result[:]

        @staticmethod
        def add(in1, in2):
            result = [[0,0],[0,0],[0,0],[0,0],[0,0],[0,0]] #empty ffwnhh pose
            for i in range(len(in1)): #for all in the first dimension
                for ii in range(2): #for all in the second dimension
                    result[i][ii] = in1[i][ii] + in2[i][ii] #add them to the answer
            return result[:]

        @staticmethod
        def multiply(in1, in2):
            result = [[0,0],[0,0],[0,0],[0,0],[0,0],[0,0]] #empty ffwnhh pose
            for i in range(len(in1)): #for all in the first dimension
                for ii in range(2): #for all in the second dimension
                    result[i][ii] = in1[i][ii] * in2 #multiply them into the answer
            return result[:]

        @staticmethod
        def lerpPoints(point1, point2, distance):
            displacement = [point2[0]-point1[0], point2[1]-point1[1]]
            progress = [displacement[0]*distance,displacement[1]*distance]
            result = [point2[0]-progress[0],point2[1]-progress[1]] #note: distance 0 gives point2, distance 1 gives point1
            return result

        @staticmethod
        def lerp(pose1, pose2, distance):
            #print("pose: Pose: lerp: ----------------------------------------------------------------------")
            #print("pose: Pose: lerp: given a distance of " + str(distance))
            #print("pose: Pose: lerp: given a pose1 of " + str(pose1))
            #print("pose: Pose: lerp: given a pose2 of " + str(pose2))
            displacement = Pose.add(pose2, Pose.neg(pose1))
            #print("pose: Pose: lerp: resulting in pose1 " + str(pose1))
            #print("pose: Pose: lerp: resulting in pose1 " + str(pose2))
            #print("pose: Pose: lerp: displacement is now " + str(displacement))
            progress = Pose.multiply(displacement, distance)
            #print("pose: Pose: lerp: resulting in pose1 " + str(pose1))
            #print("pose: Pose: lerp: resulting in pose1 " + str(pose2))
            #print("pose: Pose: lerp: progress is now " + str(progress))
            result = Pose.add(pose2, Pose.neg(progress)) #note: distance 0 gives pose2, distance 1 gives pose1
            #print("pose: Pose: lerp: resulting in pose1 " + str(pose1))
            #print("pose: Pose: lerp: resulting in pose1 " + str(pose2))
            #print("pose: Pose: lerp: result is now " + str(result))
            return result[:]

        @staticmethod
        def getHeadRot(pose1): #thrice the head's X minus the sum of the X's of the feet and waist. a decentish approximation w/o trig.
            return (3 * pose1[3][0]) - pose1[0][0] - pose1[1][0] - pose1[2][0]

        @staticmethod
        def getTailEnd(pose1): #a point which is exactly getTailLength(pose) to the left of getMidpoint(pose)
            midpt = Pose.getMidpoint(pose1)
            return [midpt[0] - Pose.getTailLength(pose1), midpt[1]]

        @staticmethod
        def getTailLength(pose1): #the average leg length
            return Pose.getMean([Pose.getDistance(pose1[0],pose1[2]), Pose.getDistance(pose1[1],pose1[2])])

        @staticmethod
        def getMean(inputList): #takes any lengthy list
            result = 0
            for item in inputList:
                result += item
            result /= len(inputList)
            return result

        @staticmethod
        def getMidpoint(pose1): #get the average of all points in a pose
            list1 = [0,0,0,0,0,0]
            list2 = [0,0,0,0,0,0]
            for point in range(len(pose1)):
                list1[point] = pose1[point][0]
                list2[point] = pose1[point][1]
            return [Pose.getMean(list1), Pose.getMean(list2)]

        @staticmethod
        def getDistance(in1, in2): #basic planar distance function: square root of ((x1-x2)^2 + (y1-y2)^2)
            return ((in1[0] - in2[0])**2 + (in1[1] - in2[1])**2)**0.5

description:

The Pose class isn’t a type that holds poses. It’s a collection of static methods for acting on poses, which are just lists of six [x, y] coordinate pairs (feet, waist, neck, hands). The Pose class can add two poses, multiply a pose by a scalar, and most importantly lerp (linearly interpolate) two poses together. Lerp is called to find every frame of an animation based on stored keyframes.
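To make the lerp step concrete, here is a compact sketch that follows the same arithmetic as the listing, including its direction: a distance of 0 returns the second pose and a distance of 1 returns the first. `lerp_pose` is a hypothetical name; the keyframe values are the two WALK keyframes from the animation library.

```python
def lerp_pose(pose1, pose2, distance):
    """Blend two poses point by point.

    Mirrors the arithmetic in the listing: start from pose2 and subtract
    `distance` times the displacement from pose1 to pose2.
    """
    result = []
    for (x1, y1), (x2, y2) in zip(pose1, pose2):
        result.append([x2 - (x2 - x1) * distance,
                       y2 - (y2 - y1) * distance])
    return result


rest = [[0, 0], [0, 0], [0, 0], [0, 0], [0, 0], [0, 0]]
walk = [[32.0, -2.0], [-31.0, -4.0], [0, 0], [0, 0], [40.0, -4.0], [-36.0, -5.0]]

halfway = lerp_pose(rest, walk, 0.5)
print(halfway[0])  # [16.0, -1.0]
```

Because every point is blended independently, the same function works for any number of joints.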

file animationUtility.py:

    from pose import *

    def terpAnim(inputAnim, baseDuration, frameIndex):
        keyPair = int(frameIndex//baseDuration)
        #print("animationUtility: terpAnim: (keyPair=" + str(keyPair) + ",frameIndex=" + str(frameIndex) + ")")
        #print("animationUtility: terpAnim: inputAnim[" + str(keyPair) + "] is " + str(inputAnim[keyPair]))
        #print("animationUtility: terpAnim: inputAnim[" + str(keyPair+1) + "] is " + str(inputAnim[keyPair+1]))
        return lerpFrames(inputAnim[keyPair], inputAnim[(keyPair+1)%len(inputAnim)], baseDuration, frameIndex % baseDuration)

    def lerpFrames(inputPose1, inputPose2, baseDuration, frameIndex):
        #print("animationUtility: lerpFrames: (baseDuration=" + str(baseDuration) + ",frameIndex=" + str(frameIndex) + ")")
        #print("animationUtility: lerpFrames: working with pose1: " + str(inputPose1))
        #print("animationUtility: lerpFrames: working with pose2: " + str(inputPose2))
        result = Pose.lerp(inputPose1, inputPose2, float(frameIndex) / baseDuration)
        #print("animationUtility: lerpFrames: resulting in pose1: " + str(inputPose1))
        #print("animationUtility: lerpFrames: resulting in pose2: " + str(inputPose2))
        return result

    def frameCount(inputAnim, baseDuration):
        return (keyframeCount(inputAnim) - 1) * baseDuration

    def keyframeCount(inputAnim):
        return len(inputAnim)

description:

The animation utility holds a few functions for playing animations, and is responsible for lerping the two appropriate keyframes to get any requested frame.
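How a frame number maps onto a pair of keyframes can be sketched in isolation. The helper names below are hypothetical; the arithmetic follows the listing, with 12 frames between keyframes as in StickmanFigure.

```python
def locate_frame(frame_index, base_duration):
    """Split a frame number into (index of the first keyframe, blend fraction)."""
    key_pair = int(frame_index // base_duration)
    fraction = float(frame_index % base_duration) / base_duration
    return key_pair, fraction


def frame_count(keyframes, base_duration):
    """Frames in one pass through an animation: one segment per keyframe gap."""
    return (len(keyframes) - 1) * base_duration


# Frame 18 at 12 frames per keyframe is halfway between keyframes 1 and 2.
print(locate_frame(18, 12.0))           # (1, 0.5)
print(frame_count([0, 1, 2], 12.0))     # 24.0
```

The blend fraction is what gets passed on to the lerp as its distance argument.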

file animationLib3.py:

    #06.24.16
    anims = dict([
        ('IDLE',
            [
                [[0,0],[0,0],[0,0],[0,0],[0,0],[0,0]],
                [[0,0],[0,0],[0,0],[0,0],[0,0],[0,0]],
                [[0,0],[0,0],[0,0],[0,0],[0,0],[0,0]]
            ]
        ),
        ('JITTERTEST',
            [
                [[5,8],[-10,-14],[0,0],[0,0],[4,4],[20,8]],
                [[3,11],[7,4],[0,0],[0,0],[-5,0],[2,-11]],
                [[-4,-2],[-2,11],[0,0],[0,0],[-8,8],[-4,-5]]
            ]
        ),
        ('LIEDOWN',
            [
                [[-14.0, -65.0], [-74.0, -55.0], [-26.0, -2.0], [10.0, 12.0], [56.0, -11.0], [17.0, 0.0]],
                [[0,0],[0,0],[0,0],[0,0],[0,0],[0,0]]
            ]
        ),
        ('WALK',
            [
                [[0, 0], [0, 0], [0, 0], [0, 0], [0, 0], [0, 0]],
                [[32.0, -2.0], [-31.0, -4.0], [0, 0], [0, 0], [40.0, -4.0], [-36.0, -5.0]]
            ]
        ),
        ('JUMP',
            [
                [[32.0, -23.0], [-29.0, -23.0], [0, 0], [0, 0], [37.0, -4.0], [-34.0, -9.0]],
                [[0, 0], [0, 0], [0, 0], [0, 0], [0, 0], [0, 0]]
            ]
        )
    ])

    def getAnim(keyword):
        return anims[keyword.upper()]

description:

These are the basic animations that made it into our final project. The speed couldn’t be set for each animation, and the movement of the character around the screen was handled by a different class, something I wasn’t aware of until after the game was finished. I would’ve incorporated that motion into these animations, but it’s probably for the best; this simple collection can’t be broken by resizing the window.

file frameRate.py:

    """
    ============== USAGE ==============
    import using:
        from frameRate import FrameRate
    create a FrameRate object to handle your frames:
        frames = FrameRate(number of frames per second)
    at the end of every frame, do:
        frames.finishFrame()
    this will hold the frame rate as near to the rate you specified as possible.
    """
    import pygame.time
    import time

    class FrameRate:
        def __init__(self, rate):
            self.bias = Biaser()
            self.t1 = pygame.time.get_ticks()
            self.targetWait = 1000.0/rate #target frame length in milliseconds

        def finishFrame(self):
            self.t2 = pygame.time.get_ticks()
            self.bias.addInput(self.t2 - self.t1) #time spent on this frame, in milliseconds
            duration = max(0, self.targetWait - self.bias.getValue())
            time.sleep(duration/1000.0) #time.sleep() takes seconds
            self.t1 = pygame.time.get_ticks()

    class Biaser:
        def __init__(self):
            #all values are in milliseconds, to match pygame.time.get_ticks()
            self.value = 1000.0/15.0
            self.last = 1000.0/15.0
            self.shortCutoff = 1000.0/120.0 #ignore frames shorter than 1/120 second
            self.longCutoff = 1000.0/12.0 #ignore frames longer than 1/12 second
            self.feedback = 0.5
            self.wetness = 0.7

        def getValue(self):
            result = (self.value*self.wetness) + (self.last*(1-self.wetness))
            return result

        def addInput(self, in1):
            self.value = (self.value*self.feedback) + (max(min(in1, self.longCutoff), self.shortCutoff)*(1-self.feedback))
            self.last = in1

description:

This was the first class I wrote that wasn’t part of my StickmanFigure. The object dynamically adjusts the wait period at the end of each frame to prioritize a stable framerate over a high one. It has cutoff values so that it can’t be majorly affected by crazy frames that take longer than 1/12 second (if the game is paused) or shorter than 1/120 second (supposing we accidentally called draw multiple times in a row). Thus it never needs to be turned off to preserve its vision of the perfect framerate.
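The clamping-and-smoothing idea can be tried without pygame. The sketch below is a simplified, hypothetical stand-in for the Biaser: it works entirely in milliseconds and leaves out the extra mix with the raw last sample, so treat it as an illustration of the idea, not the project's class.

```python
class FrameTimeSmoother:
    """Running estimate of frame time in milliseconds, with outliers clamped."""

    def __init__(self, short_cutoff=1000.0 / 120.0, long_cutoff=1000.0 / 12.0,
                 feedback=0.5, start=1000.0 / 15.0):
        self.short_cutoff = short_cutoff  # frames shorter than this are clamped up
        self.long_cutoff = long_cutoff    # frames longer than this are clamped down
        self.feedback = feedback          # weight given to the previous estimate
        self.value = start

    def add_input(self, frame_ms):
        clamped = max(min(frame_ms, self.long_cutoff), self.short_cutoff)
        self.value = self.value * self.feedback + clamped * (1 - self.feedback)
        return self.value


def wait_ms(target_ms, smoothed_ms):
    """How long to sleep so that work plus sleep adds up to the target frame time."""
    return max(0.0, target_ms - smoothed_ms)


smoother = FrameTimeSmoother()
# A five-second pause is clamped to 1/12 second before it reaches the estimate.
after_pause = smoother.add_input(5000.0)
print(round(after_pause, 3))  # 75.0
print(wait_ms(33.0, 10.0))    # 23.0
```

With the clamp in place, a single paused frame nudges the estimate instead of wrecking it.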

And you’ll notice I’m getting better at commenting my code as time passes.

file soundEngine.py:

    import pygame
    import pygame.mixer
    import time
    import os

    """
    ============ USAGE ===========
    -import using:
        from soundEngine import *
    -initialize a SoundEngine:
        self.yourMusicThing = SoundEngine()
    -load music and effect before you need to use them:
        self.yourMusicThing.loadMusic()
        self.yourMusicThing.loadEffect()
    -play sounds from \sound\effect\ using:
        self.yourMusicThing.playEffect('sound ID')
        (when adding new sounds to \sound\effect\
        make sure to add them to the dictionary as well)
    -switch to new music from \sound\music\ using:
        self.yourMusicThing.setMusic("song ID")
        (songs are:
        - 'TITLE'
        - 'AMBIENT'
        - 'BATTLE'
        - 'BOSS'
        - 'WIN'
        - 'LOSE')
    switching to new music will stop the old music.
    """

    class SoundEngine:
        pygame.mixer.init(24000, 16, 2, 4096)

        def __init__(self):
            print("sound engine: initializing...")
            self.soundSubdir = "\\sound"
            self.musicSubdir = "\\music\\"
            self.effectSubdir = "\\effect\\"
            self.container = ".ogg"
            self.currentMusic = 'AMBIENT'
            self.fadeDuration = 250
            print("sound engine: initialized")

        def loadMusic(self):
            print("sound engine: loading music...")
            print(" \"BlockMan\", \"Cyborg Ninja\", \"Danger Storm\", \"Pixelland\", \"Show Your Moves\"\n Kevin MacLeod (incompetech.com)\n Licensed under Creative Commons: By Attribution 3.0\n http://creativecommons.org/licenses/by/3.0/")
            self.music = dict([
                #('TITLE', self.getSound(self.musicSubdir, "incompetech-spazzmatic_polka")),
                ('AMBIENT', self.getSound(self.musicSubdir, "incompetech-pixelland")),
                ('BATTLE', self.getSound(self.musicSubdir, "incompetech-cyborg_ninja")),
                ('BOSS', self.getSound(self.musicSubdir, "incompetech-blockman")),
                ('LOSE', self.getSound(self.musicSubdir, "incompetech-danger_storm")),
                ('WIN', self.getSound(self.musicSubdir, "incompetech-show_your_moves"))
            ])
            print("sound engine: music loaded")

        def loadEffect(self):
            print("sound engine: loading effect...")
            self.effect = dict([
                ('TEST', self.getSound(self.effectSubdir, "pocketTestTone"))
            ])
            print("sound engine: effect loaded")

        def getSound(self, subdir, soundName):
            return pygame.mixer.Sound(self.getFilepath(subdir, soundName))

        def getFilepath(self, subdir, soundName):
            result = os.path.realpath(os.getcwd()) + self.soundSubdir + subdir + soundName + self.container
            return result

        def setMusic(self, musicName):
            print("sound engine: switching music to " + musicName)
            self.stopMusic()
            self.currentMusic = musicName
            self.startMusic()

        def playEffect(self, effectName):
            self.effect[effectName].play(0,3000,0)

        def stopMusic(self):
            self.music[self.currentMusic].fadeout(self.fadeDuration)

        def startMusic(self):
            self.music[self.currentMusic].play(-1, 0, self.fadeDuration)

description:

This sound engine simplifies the loading of songs and sound effects. It lets us change songs at will without worrying about having two playing at once (remember MC-35856? That’s still a thing).
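The stop-then-start ordering that keeps two songs from overlapping can be shown with a stand-in sound object. Everything here is hypothetical scaffolding: `FakeSound` only mimics the `play` and `fadeout` calls of a pygame sound and flips a flag immediately, whereas a real fade-out takes a moment to finish.

```python
class FakeSound:
    """Stand-in for a pygame sound that only records whether it is playing."""

    def __init__(self):
        self.playing = False

    def play(self, loops=0, maxtime=0, fade_ms=0):
        self.playing = True

    def fadeout(self, time):
        self.playing = False


class MusicSwitcher:
    """Holds named tracks and makes sure the old one is stopped before the new one starts."""

    def __init__(self, tracks, current, fade_duration=250):
        self.tracks = tracks
        self.current = current
        self.fade_duration = fade_duration

    def start(self):
        self.tracks[self.current].play(-1, 0, self.fade_duration)

    def set_music(self, name):
        self.tracks[self.current].fadeout(self.fade_duration)  # stop the old song first
        self.current = name
        self.start()


tracks = {"AMBIENT": FakeSound(), "BOSS": FakeSound()}
switcher = MusicSwitcher(tracks, "AMBIENT")
switcher.start()
switcher.set_music("BOSS")

now_playing = sorted(name for name, sound in tracks.items() if sound.playing)
print(now_playing)  # ['BOSS']
```

Tracking the current song's name in one place is what lets the switch always know which track to fade out.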

When the game starts, it credits Kevin MacLeod for his amazing music used under a Creative Commons Attribution license v3.0.

When I put the soundtrack together I was able to squeeze everything down to 32kbps or so with Ogg Vorbis, which became my new favorite audio codec. So the game takes only a second to launch.

During my stay at Rose, I improved my ability to work in a group with other programmers, and rediscovered that it’s fun. I gained a fascination for Python. There is no language but Python. All other languages are trash. I would delete them from my mind if Python were faster by a factor of just a few hundred. (No, just kidding. I never delete anything.)

At the end, we were awarded 5th place for best project, out of more than 40, spanning many fields of engineering.